MX Performance Comparison #2: Exhaustive Ring Perception in MX and CDK

Benchmarking can be a useful first step in optimizing the performance of software. Recently a group of developers including myself began creating an open suite of benchmarks for cheminformatics. Currently, two open-source cheminformatics toolkits are included: MX and CDK.

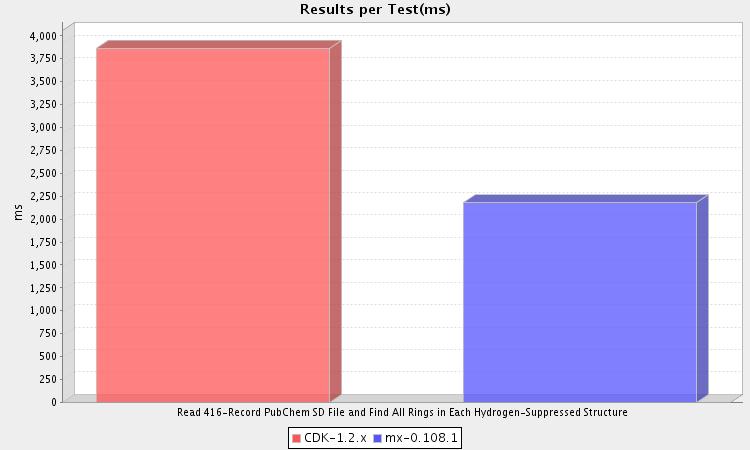

Ring perception underlies many cheminformatics algorithms, so its performance matters. How do MX and CDK compare? See for yourself:

This benchmark finds all rings in a collection of 416 substituted benzenes obtained from a PubChem query. Timing starts only after an in-memory collection of hydrogen-suppressed molecules has been built, so differences in I/O performance don't affect the results. As you can see, MX is about 44% faster than CDK. Both toolkits find the same total number of rings in the dataset (2,179).

To run the benchmark yourself, use the GitHub repository.

One anecdotal observation: the number of iterations (10 warmup, 5 test) is lower than usual because CDK appeared to run slower with each successive iteration. After 18 iterations, my system had come to a standstill. The cause is not clear, but the configuration used here (15 iterations in total) avoids this behavior.
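For readers unfamiliar with the warmup-then-measure pattern, here is a minimal Java sketch of how such a harness might look. It is not the benchmark suite's actual code: the class `RingBenchmark`, its `measure`, `check`, and `cyclomaticNumber` methods, and the placeholder data are all hypothetical. Molecules are built in memory before any timing starts, untimed warmup runs give the JIT a chance to compile hot code, and every run's ring total is compared against the first, so a toolkit that returns inconsistent results is caught.

```java
import java.util.Arrays;
import java.util.function.IntSupplier;

// Hypothetical warmup-then-measure harness; not the suite's actual code.
final class RingBenchmark {

    // Runs `task` warmupRuns times untimed (assumes warmupRuns >= 1), then
    // testRuns times timed. Every run must report the same ring total.
    static long[] measure(IntSupplier task, int warmupRuns, int testRuns) {
        int expected = task.getAsInt(); // first warmup run fixes the total
        for (int i = 1; i < warmupRuns; i++) {
            check(task.getAsInt(), expected);
        }
        long[] nanos = new long[testRuns];
        for (int i = 0; i < testRuns; i++) {
            long start = System.nanoTime();
            int total = task.getAsInt();
            nanos[i] = System.nanoTime() - start;
            check(total, expected);
        }
        return nanos;
    }

    // Fails fast if a run reports a different ring total than the first run.
    static void check(int actual, int expected) {
        if (actual != expected) {
            throw new IllegalStateException(
                "ring total changed: " + actual + " vs " + expected);
        }
    }

    // Placeholder workload: cyclomatic number (bonds - atoms + 1) of one
    // connected graph with at least one bond. It counts independent rings
    // only, NOT all rings, and merely stands in for a real ring finder.
    static int cyclomaticNumber(int[][] bonds) {
        int atoms = 0;
        for (int[] bond : bonds) {
            atoms = Math.max(atoms, Math.max(bond[0], bond[1]) + 1);
        }
        return bonds.length - atoms + 1;
    }

    public static void main(String[] args) {
        // Build all molecules in memory BEFORE timing, so I/O is excluded.
        // Hypothetical data: 416 copies of a hydrogen-suppressed benzene ring.
        int[][][] molecules = new int[416][][];
        for (int m = 0; m < molecules.length; m++) {
            molecules[m] = new int[][] {{0, 1}, {1, 2}, {2, 3}, {3, 4}, {4, 5}, {5, 0}};
        }
        IntSupplier task = () -> {
            int total = 0;
            for (int[][] bonds : molecules) {
                total += cyclomaticNumber(bonds);
            }
            return total;
        };
        long[] nanos = measure(task, 10, 5); // 10 warmup, 5 test, as in the post
        System.out.println("timed runs (ns): " + Arrays.toString(nanos));
    }
}
```

In a real comparison, `task` would call MX's or CDK's ring finder over the preloaded molecules. The cyclomatic number is used only to keep the sketch self-contained; for naphthalene it gives 2, whereas exhaustive ring perception finds 3 rings.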

Both CDK and MX implement the Hanser algorithm, although even a quick glance at the respective sources reveals big differences in implementation. The MX implementation was optimized for readability and correctness, not performance, so there may be low-hanging fruit available from even the simplest optimizations.
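The core idea of Hanser's approach can be sketched in a few dozen lines of Java. This is a minimal sketch under stated assumptions, not MX's or CDK's code: the class `HanserRings` and its helpers are hypothetical, the molecule is assumed to be a hydrogen-suppressed graph given as pairs of atom indices, and removing the lowest-degree atom first is one common heuristic rather than a requirement. Each bond starts out as an edge of a "path graph"; atoms are then removed one at a time, and every pair of edges meeting at the removed atom is spliced into a longer path. A splice whose two ends meet closes a ring, and a splice that would revisit an atom is discarded.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

// Minimal sketch of Hanser-style exhaustive ring perception.
// Hypothetical code: not taken from MX or CDK.
final class HanserRings {

    // Returns every simple cycle (ring) of a hydrogen-suppressed graph.
    // atomCount: number of atoms; bonds: pairs of atom indices.
    static List<List<Integer>> findRings(int atomCount, int[][] bonds) {
        // Path-graph edges: each is an atom path from one endpoint to the other.
        List<List<Integer>> edges = new ArrayList<>();
        for (int[] bond : bonds) {
            edges.add(List.of(bond[0], bond[1]));
        }
        List<List<Integer>> rings = new ArrayList<>();
        boolean[] removed = new boolean[atomCount];

        for (int step = 0; step < atomCount; step++) {
            int x = lowestDegreeVertex(atomCount, removed, edges);
            removed[x] = true;

            // Separate the edges that end at x from the rest.
            List<List<Integer>> incident = new ArrayList<>();
            List<List<Integer>> remaining = new ArrayList<>();
            for (List<Integer> e : edges) {
                if (e.get(0) == x || e.get(e.size() - 1) == x) {
                    incident.add(e);
                } else {
                    remaining.add(e);
                }
            }
            // Splice every pair of edges through x: [p..x] + [x..q].
            for (int i = 0; i < incident.size(); i++) {
                for (int j = i + 1; j < incident.size(); j++) {
                    List<Integer> path = splice(orient(incident.get(i), x, false),
                                                orient(incident.get(j), x, true));
                    if (path == null) {
                        continue; // the paths share an atom: not a simple path
                    }
                    if (path.get(0).equals(path.get(path.size() - 1))) {
                        rings.add(path.subList(0, path.size() - 1)); // closed: ring
                    } else {
                        remaining.add(path); // open: new path-graph edge
                    }
                }
            }
            edges = remaining; // all edges touching x are dropped
        }
        return rings;
    }

    // Orients an edge so it starts at x (startAtX) or ends at x (otherwise).
    static List<Integer> orient(List<Integer> edge, int x, boolean startAtX) {
        boolean startsAtX = edge.get(0) == x;
        if (startsAtX == startAtX) {
            return edge;
        }
        List<Integer> reversed = new ArrayList<>(edge);
        Collections.reverse(reversed);
        return reversed;
    }

    // Joins a = [p..x] and b = [x..q]; returns null unless the result is a
    // simple path, or a ring that closes back at p (q == p).
    static List<Integer> splice(List<Integer> a, List<Integer> b) {
        Set<Integer> seen = new HashSet<>(a);
        int p = a.get(0);
        for (int k = 1; k < b.size(); k++) {
            int v = b.get(k);
            boolean closesRing = k == b.size() - 1 && v == p;
            if (seen.contains(v) && !closesRing) {
                return null;
            }
        }
        List<Integer> joined = new ArrayList<>(a);
        joined.addAll(b.subList(1, b.size()));
        return joined;
    }

    // Picks the not-yet-removed atom with the fewest path-graph edges.
    static int lowestDegreeVertex(int n, boolean[] removed, List<List<Integer>> edges) {
        int[] degree = new int[n];
        for (List<Integer> e : edges) {
            degree[e.get(0)]++;
            degree[e.get(e.size() - 1)]++;
        }
        int best = -1;
        for (int v = 0; v < n; v++) {
            if (!removed[v] && (best < 0 || degree[v] < degree[best])) {
                best = v;
            }
        }
        return best;
    }

    public static void main(String[] args) {
        // Naphthalene skeleton: two six-membered rings sharing bond 4-5.
        int[][] naphthalene = {
            {0, 1}, {1, 2}, {2, 3}, {3, 4}, {4, 5}, {5, 0},
            {4, 6}, {6, 7}, {7, 8}, {8, 9}, {9, 5}
        };
        System.out.println("naphthalene rings: " + findRings(10, naphthalene).size());
        // prints "naphthalene rings: 3"
    }
}
```

For naphthalene the sketch reports the two six-membered rings plus the ten-membered envelope around both. Because every simple cycle is enumerated, the number of intermediate paths can grow exponentially for large fused ring systems.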

For more details, see the full report.